Takeaways on ‘rescuing truth’ from the Social Media Summit @ MIT

Regulation and engagement offer ways forward

Hello! My apologies for sending this out late. This week has been incredibly long and stressful (even for those who didn’t have to watch the two televised Cabinet meetings) and my mind went on strike. We’ll be back to the regular schedule next week.

I’m also trying a more concise (by around 10 words) format for better (and hopefully more) reading. Please let me know if it works.

Welcome again to FightDisinfo, a newsletter on disinformation.

—

One session at the Social Media Summit @ MIT this week gathered four experts on media and disinformation to talk about "rescuing truth" from disinformation, conspiracy theories, and the social media problems that have made dialogue and discourse difficult.

Here are five main points from that discussion.

Facts are facts, and should carry more weight

Social media platforms have made content easier to access and distribute, but their algorithms also mean people have little idea what the people they interact with are seeing, or what information those people are basing their positions on.

"Some things are not debatable. Facts are not debatable and to pretend that they are is leading us down the road to hell," Rappler CEO Maria Ressa said, adding social media platforms have to admit that they are part of the problem so they can help solve it.

Platforms, she said, treat content equally, which disadvantages news and verified information since disinformation has been shown to travel faster, further, and more broadly on social media.

"Fact and fiction, lies and facts are identically treated as data. It's happening in every democracy around the world where social media delivers the news," she warned. "Without facts we have no shared reality. We have no facts, we have no truth, we have no trust."

Clint Watts, a senior fellow at the Center for Cyber and Homeland Security at George Washington University, said facts should stick to the '5Ws'—who, what, when, where, why—and suggested that platforms evaluate the accuracy of outlets and authors over time.

Social media makes it harder to hold power accountable

While the media is supposed to hold power to account, social media and the information operations run on it make exactly that harder, since governments can use, and have used, disinformation to discredit the press.

Ressa said Rappler has seen networks of disinformation and the "seeding of meta-narrative, for example, that journalists are criminals."

She said this "pounds you to silence and creates a bandwagon effect so that more people believe" the allegations. The allegations, which appeared first on social media and later came from government officials and agencies, have led to a string of cases against the news website.

Ali Velshi, a senior economic and business correspondent for NBC News, said that discussing issues is difficult when "much of the media has been painted [in a way] that suggests you're just lying."

RELATED: Media trust hits new low (Axios)

"When the power you are trying to hold to account is using its power simply to discredit you, not argue or to debate... simply to discredit you, over time, in a society that does not have a shared experience, that grows and that has grown into widespread distrust of media."

That, he said, has led to people having "their own sets of facts."

Regulatory and public pressure works

Camille Francois, chief innovation officer at Graphika, said that when she was looking into governments using "patriotic trolling" to harass their critics and silence journalists years ago, "nobody in Silicon Valley cared, frankly."

Francois said that alleged Russian influence in the 2016 US elections prompted a strong congressional and public campaign for social media platforms to step in.

"Now, we kind of have this reality where, of course, they have rules against these sub-types of activity. Of course, they have investigators that systematically go after and find those fake accounts, and, of course, they share the data."

She said issues like disinformation networks are no longer seen as just people being mean on the internet.

Watts cautioned, though, against relying too much on "policing" content, since disinformation is quicker to produce than to check. Policing also tends to slide into telling people what they can and can't say, which runs counter to democratic ideals.

A better approach, he said, would be to "focus on the most prolific offenders over time" and to keep them from spreading disinformation.

RELATED (TO WATTS’ WORK): How Can America Counter Domestic Extremism? (Selected Wisdom)

Include instead of ignore

Velshi said that it is important to welcome people and their ideas "because there is a sense that we invalidate ideas that are not ours."

He said most people don't really subscribe to conspiracy theories but may consume content related to them and may be influenced by them.

He said it would be better to talk about legitimate issues that might be raised "but instead, we're having a discussion that is causing people to act a certain way politically based on falsehoods that they cannot even identify."

"You can't actually ignore them," he also said. "It erodes your base of people who will look to you for information and you end up talking to a smaller and smaller group of people."

Ressa offered one caveat, though: "Real grassroots movements should not be compared to insidious manipulation [but] social media platforms cannot distinguish the difference."

RELATED: Can we all get along to getting along? (This newsletter)

Opinion and bias are not the enemy

Velshi, who said he wants to amplify pluralism, said people should be able to discuss issues more.

He said one problem is that people conflate opinion or bias with unreliability. "That's not the enemy, unreliability is the enemy, dishonesty is the enemy, falsity is the enemy."

People should be able to debate and discuss as long as they do it in good faith, he said. "We need to sort of say opinion is fine, bias is fine. Lying is dangerous, ignoring facts is dangerous."

Rappler has a video of the summit if you want to check it out.

—

Elsewhere on the internet

The Philippines has slipped two places on the World Press Freedom Index, with Reporters Without Borders noting that the government “has developed several ways to pressure journalists who dare to be overly critical of the summary methods adopted by ‘Punisher’ Duterte and his ‘war on drugs’.”

The Palace’s comment: “We see nothing wrong with it.” (BusinessWorld)

A related RSF tracker notes that "at least two journalists are currently facing two-month prison terms for spreading 'fake news' about the COVID-19 crisis" under the Bayanihan to Heal as One Act.

A peek at what content moderators at Facebook go through, and what prompted one to quit. "Content analysts are paid to look at the worst of humanity for eight hours a day. We're the tonsils of the internet, a constantly bombarded first line of defense against potential trauma to the userbase." (SFGATE)

On the other side of that, and on a different platform: A former Alt-Right YouTuber. “We realized that if we wanted a future on YouTube, it had to be driven by confrontation. Every time we did that kind of thing, it would explode well beyond anything else.”

Also, from Princeton: Consuming online partisan news leads to distrust in the media

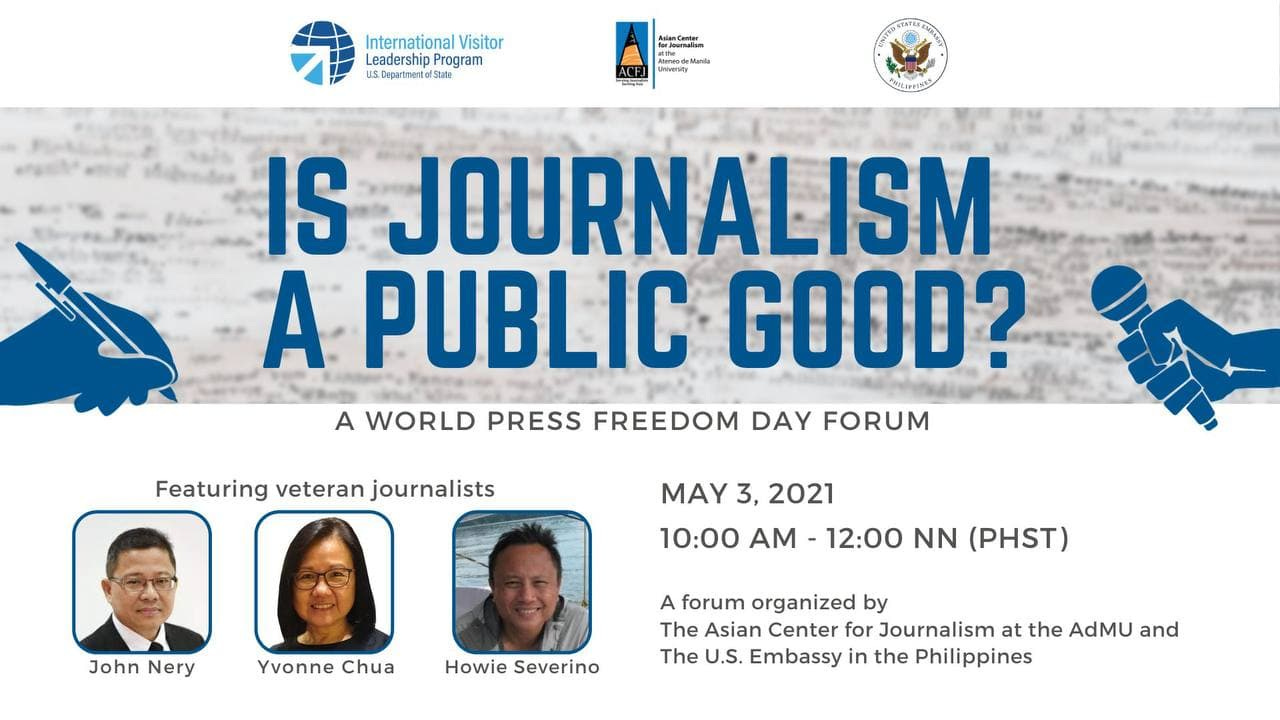

Veteran journalists John Nery, Yvonne Chua and Howie Severino will be at a forum for World Press Freedom Day on May 3 to discuss journalism as a public good.

Registration is through this link.

Elephants may be in the room, but will be on mute.